In this article, you’ll learn how to use scraping and Google’s Knowledge Graph to automate prompt engineering, generating an outline and summary for an article that, if well written, will contain many of the key ingredients needed to rank well.

At the root of things, we’re telling GPT-4 to produce an article outline based on a keyword and the top entities found on a well-ranking page of your choice.

The entities are ordered by their salience score.

“Why salience score?” you might ask.

Google describes salience in their API docs as:

“The salience score for an entity provides information about the importance or centrality of that entity to the entire document text. Scores closer to 0 are less salient, while scores closer to 1.0 are highly salient.”

Seems a pretty good metric to use to influence which entities should exist in a piece of content you might want to write, doesn’t it?
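To make the ordering concrete, here is a minimal sketch of ranking entities by salience. The Entity type and the scores are hypothetical stand-ins for illustration only; the real entities come from Google's API later in the article.

```python
from typing import NamedTuple


class Entity(NamedTuple):
    """Hypothetical stand-in for an entity returned by Google's API."""
    name: str
    salience: float


def top_by_salience(entities, n=10):
    """Return the n entities with the highest salience, most salient first."""
    return sorted(entities, key=lambda e: e.salience, reverse=True)[:n]


# Made-up scores, for illustration only
sample = [
    Entity("keyword research", 0.12),
    Entity("SEO", 0.41),
    Entity("backlinks", 0.07),
]

for entity in top_by_salience(sample, n=2):
    print(f"{entity.name}: {entity.salience:.2f}")
```

Entities near the bottom of such a list contribute little to what the page is about, which is why we only pass the top few to GPT-4.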

Getting started

There are two ways you can go about this:

- Spend about 5 minutes (maybe 10 if you need to set up your computer) and run the scripts from your machine, or…

- Jump to the Colab I created and start playing around right away.

I’m partial to the first, but I’ve also jumped to a Colab or two in my day.

Assuming you’re still here and want to get this set up on your own machine but don’t yet have Python installed or an IDE (Integrated Development Environment), I will direct you first to a quick read on setting your machine up to use Jupyter Notebook. It shouldn’t take more than about 5 minutes.

Now, it’s time to get going!

Using Google entities and GPT-4 to create article outlines

To make this easy to follow along, I’m going to format the directions as follows:

- Step: A brief description of the step we’re on.

- Code: The code to complete that step.

- Explanation: A short explanation of what the code is doing.

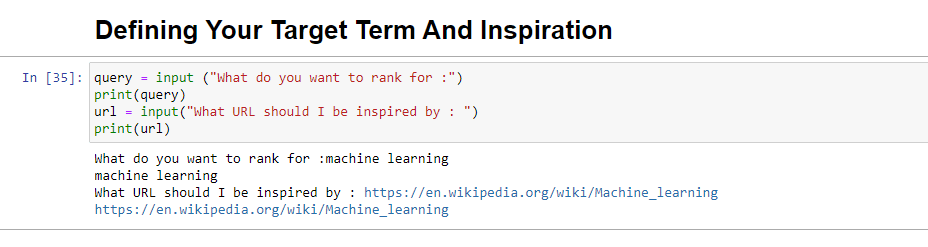

Step 1: Tell me what you want

Before we dive into creating the outlines, we need to define what we want.

    query = input("What do you want to rank for: ")
    print(query)

    url = input("What URL should I be inspired by: ")
    print(url)

When run, this block will prompt the user (probably you) to enter the query you’d like the article to rank for/be about, as well as the URL of an article you’d like your piece to be inspired by.

I’d suggest an article that ranks well, is in a format that will work for your site, and that you think is well-deserving of the rankings by the article’s value alone and not just the strength of the site.

When run, it will look like:

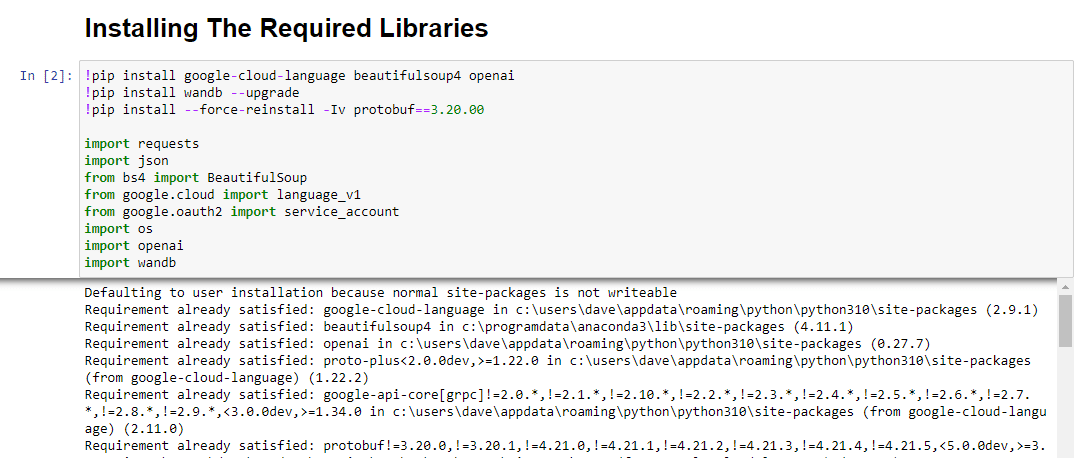
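A typo in the URL only shows up later, when the scrape fails. As an optional extra, a small check like the sketch below could catch it right away. The helper name is_valid_url is my own and not part of the original script; it uses only Python's standard library.

```python
from urllib.parse import urlparse


def is_valid_url(candidate: str) -> bool:
    """Return True if the string looks like an absolute http(s) URL."""
    parsed = urlparse(candidate.strip())
    return parsed.scheme in ("http", "https") and bool(parsed.netloc)


# Example use in the notebook cell (hypothetical):
# url = input("What URL should I be inspired by: ")
# if not is_valid_url(url):
#     print("That doesn't look like a full URL. Include https:// at the start.")
```

This only checks the shape of the URL. It does not confirm the page exists or can be scraped.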

Step 2: Installing the required libraries

Next, we must install all the libraries we will use to make the magic happen.

    # openai.ChatCompletion (used in Step 4) was removed in openai 1.0, so pin an earlier release
    !pip install google-cloud-language beautifulsoup4 "openai<1"
    !pip install wandb --upgrade
    !pip install --force-reinstall -Iv protobuf==3.20.00

    import requests
    import json
    from bs4 import BeautifulSoup
    from google.cloud import language_v1
    from google.oauth2 import service_account
    import os
    import openai
    import pandas as pd
    import wandb

We’re installing and importing the following libraries:

- Requests: This library allows making HTTP requests to retrieve content from websites or web APIs.

- JSON: It provides functions to work with JSON data, including parsing JSON strings into Python objects and serializing Python objects into JSON strings.

- BeautifulSoup: This library is used for web scraping purposes. It helps in parsing and navigating HTML or XML documents and extracting relevant information from them.

- Google.cloud.language_v1: It is a library from Google Cloud that provides natural language processing capabilities. It allows performing various tasks like sentiment analysis, entity recognition, and syntax analysis on text data.

- Google.oauth2.service_account: This library is part of the Google OAuth2 Python package. It provides support for authenticating with Google APIs using a service account, which is a way to grant limited access to the resources of a Google Cloud project.

- OS: This library provides a way to interact with the operating system. It allows accessing various functionalities like file operations, environment variables, and process management.

- OpenAI: This library is the OpenAI Python package. It provides an interface to interact with OpenAI’s language models, including GPT-4 (and 3). It allows developers to generate text, perform text completions, and more.

- Pandas: It is a powerful library for data manipulation and analysis. It provides data structures and functions to efficiently handle and analyze structured data, such as tables or CSV files.

- WandB: This library stands for “Weights & Biases” and is a tool for experiment tracking and visualization. It helps log and visualize the metrics, hyperparameters, and other important aspects of machine learning experiments.

When run, it looks like this:

Step 3: Authentication

I’m going to have to sidetrack us for a moment to head off and get our authentication in place. We will need an OpenAI API key and Google Knowledge Graph Search credentials.

This will only take a few minutes.

Getting your OpenAI API key

At present, you likely need to join the waitlist. I'm lucky to have access to the API early, and so I am writing this to help you get set up as soon as you get it.

The signup images are from GPT-3 and will be updated for GPT-4 once the flow is available to all.

Before you can use GPT-4, you'll need an API key to access it.

To get one, simply head over to OpenAI's product page, and click Get started.

Choose your signup method (I chose Google) and run through the verification process. You'll need access to a phone that can receive texts for this step.

Once that's complete, you'll create an API key. This is so OpenAI can connect your scripts to your account.

They must know who's doing what and determine if and how much they should charge you for what you're doing.

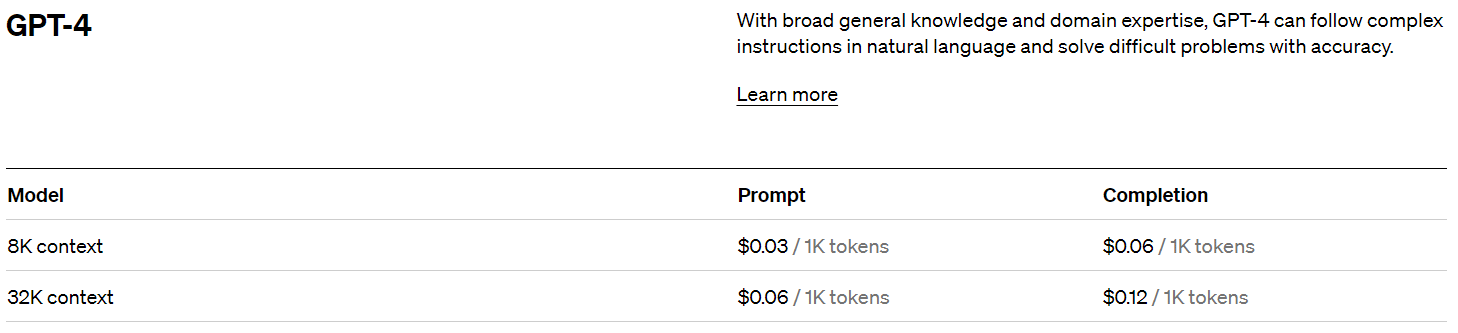

OpenAI pricing

Upon signing up, you get a $5 credit which will get you surprisingly far if you're just experimenting.

As of this writing, the pricing past that is:

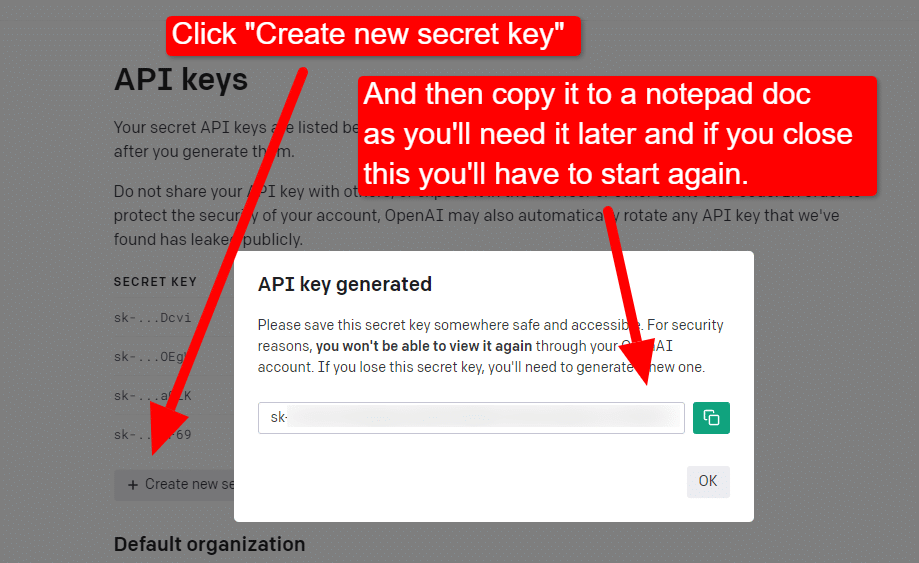

Creating your OpenAI key

To create your key, click on your profile in the top right and choose View API keys.

...and then you'll create your key.

Once you close the lightbox, you can't view your key and will have to recreate it, so for this project, simply copy it to a Notepad doc to use shortly.

Note: Don't save your key to disk (a Notepad doc on your desktop is not secure). Once you've pasted it into the script, close the Notepad doc without saving it.
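If you'd rather not have the key pass through a text file at all, one option is to read it from an environment variable and fall back to a hidden prompt. This is a minimal sketch, not part of the original script: load_api_key is a hypothetical helper, and OPENAI_API_KEY is the variable name used later in this article.

```python
import os
from getpass import getpass


def load_api_key(env_var: str = "OPENAI_API_KEY") -> str:
    """Read a key from the environment, prompting (without echo) if it is missing."""
    key = os.environ.get(env_var, "").strip()
    if not key:
        # getpass hides the typed or pasted value, so it never appears in cell output
        key = getpass(f"Enter value for {env_var}: ").strip()
    if not key:
        raise ValueError(f"No value provided for {env_var}")
    return key


# Example use (hypothetical):
# os.environ["OPENAI_API_KEY"] = load_api_key()
```

The key still lives in memory for the session, but it is never written into the notebook itself.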

Getting your Google Cloud authentication

First, you’ll need to log in to your Google account. (You're on an SEO site, so I assume you have one.)

Once you’ve done that, you can review the Knowledge Graph API info if you feel so inclined or jump right to the API Console and get going.

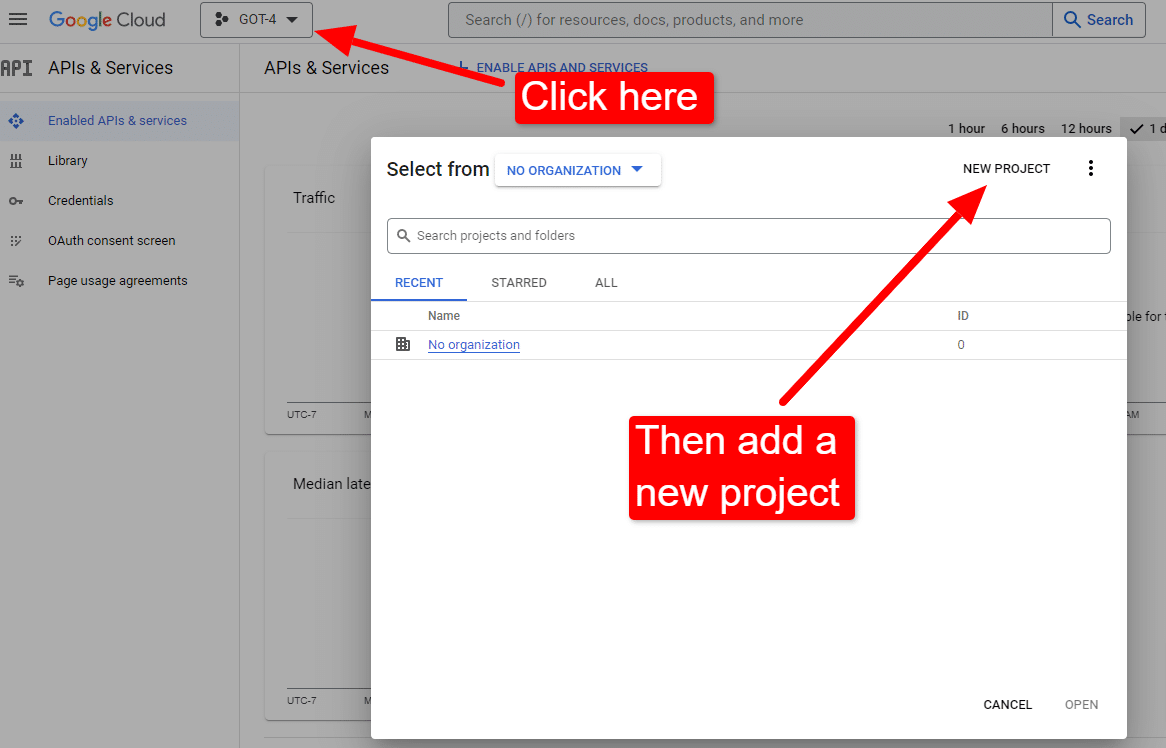

Once you’re at the console:

Name your project something like “Dave’s Awesome Articles.” You know… easy to remember.

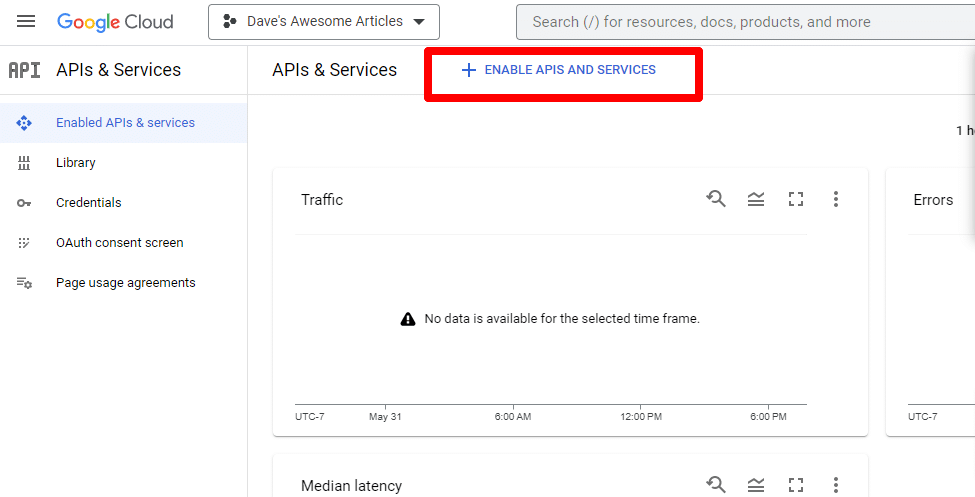

Next, you’ll enable the API by clicking Enable APIs and services.

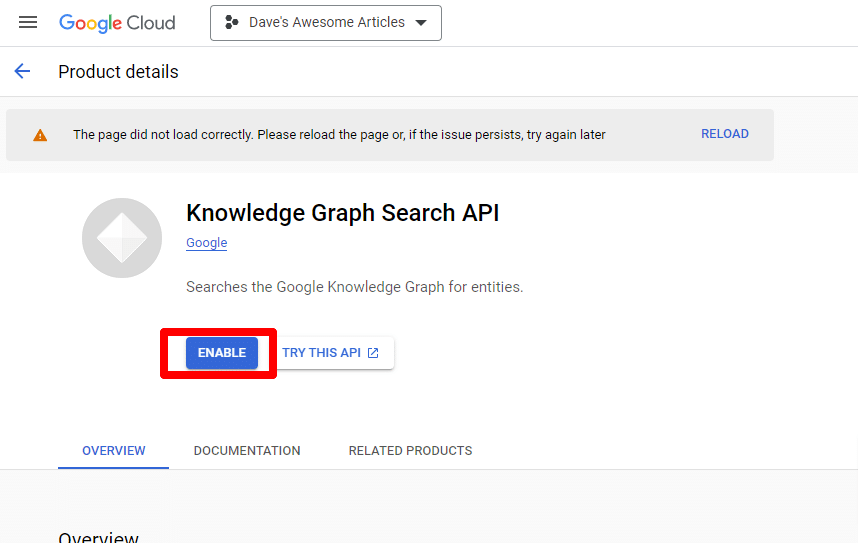

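Find the Knowledge Graph Search API, and enable it. The language_v1 client used in Step 4 calls the Cloud Natural Language API, so search for that API and enable it in the same project as well.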

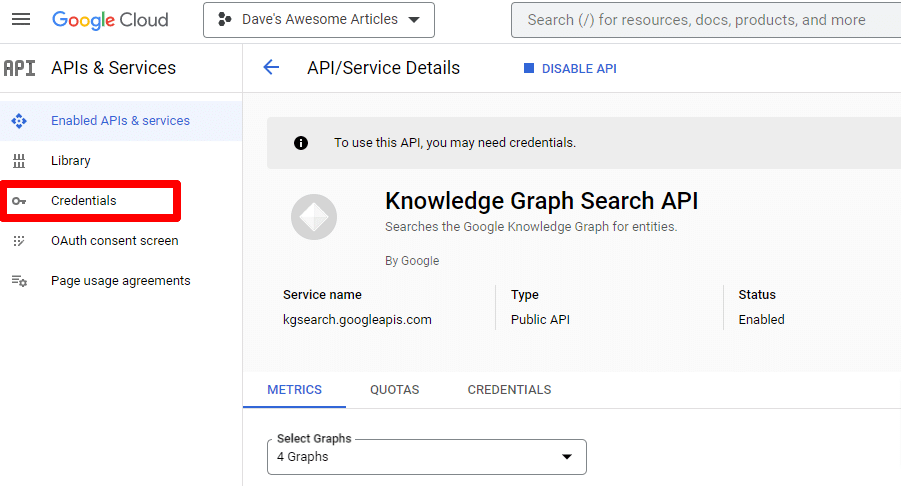

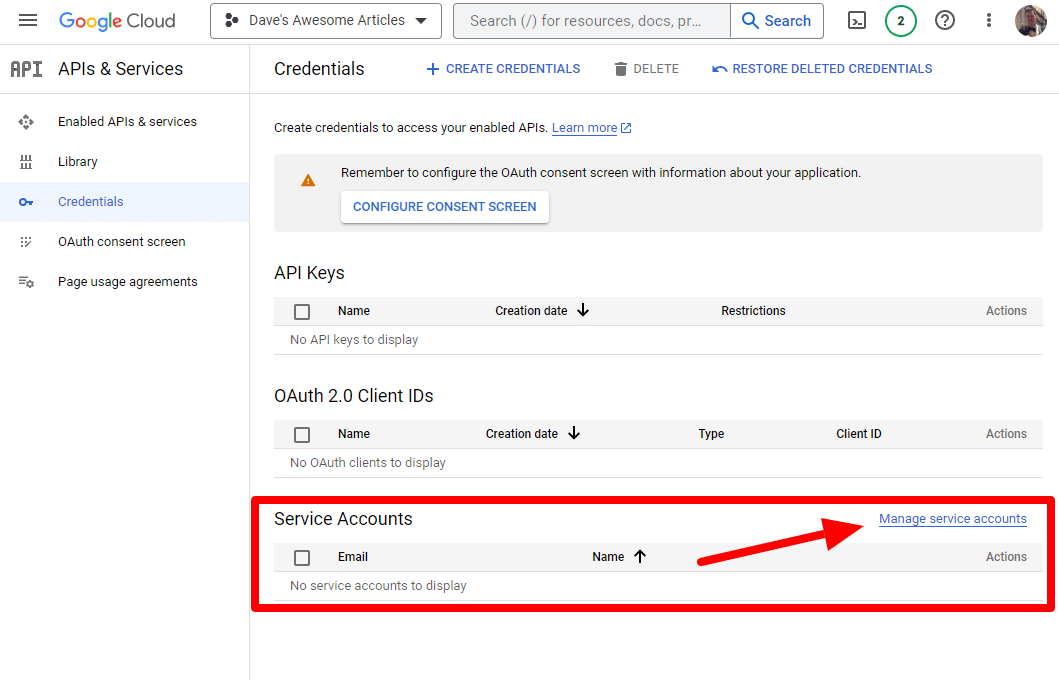

You’ll then be taken back to the main API page, where you can create credentials:

And we’ll be creating a service account.

Simply create a service account:

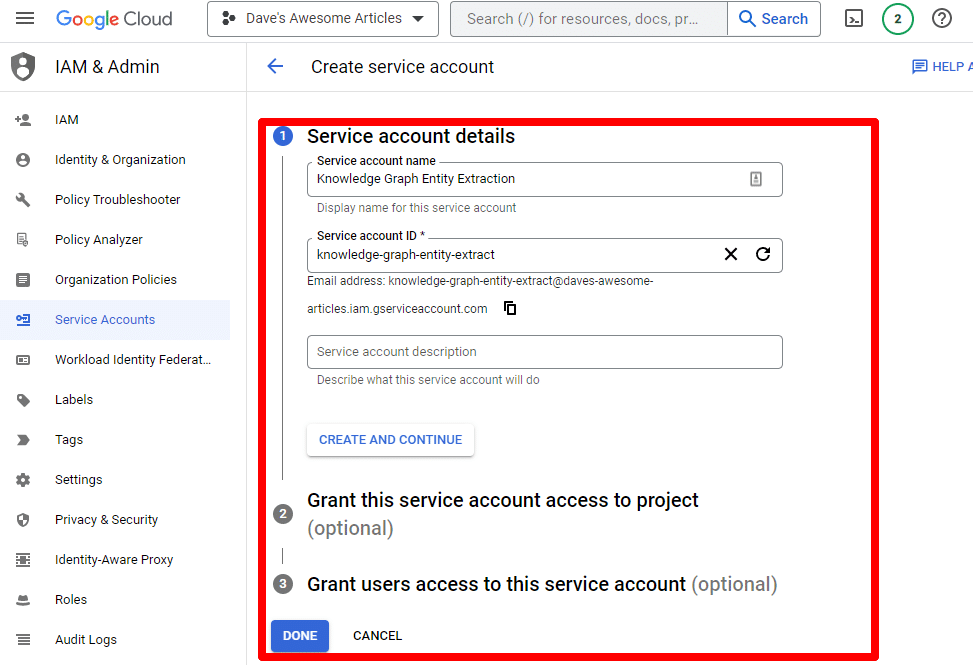

Fill in the required information:

(You’ll need to give it a name and grant it owner privileges.)

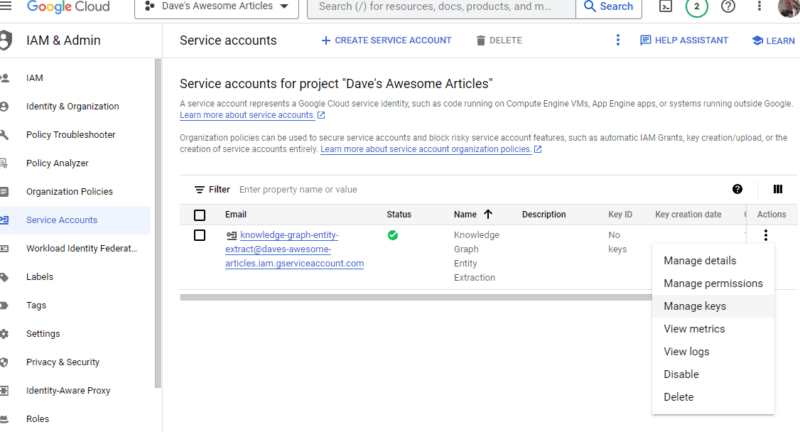

Now we have our service account. All that’s left is to create our key.

Click the three dots under Actions and click Manage keys.

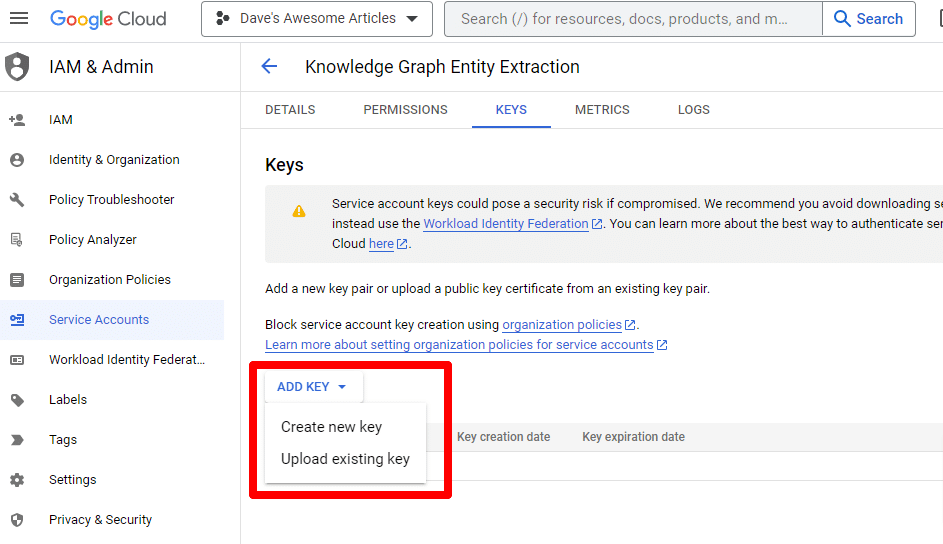

Click Add key then Create new key:

The key type will be JSON.

Immediately, you’ll see it download to your default download location.

This key will give access to your APIs, so keep it safe, just like your OpenAI API key.

Alright… and we’re back. Ready to continue with our script?

Now that we have both, we need to define our API key and the path to the downloaded file. The code to do this is:

    os.environ['GOOGLE_APPLICATION_CREDENTIALS'] = '/PATH-TO-FILE/FILENAME.JSON'
    %env OPENAI_API_KEY=YOUR_OPENAI_API_KEY
    openai.api_key = os.environ.get("OPENAI_API_KEY")

You will replace YOUR_OPENAI_API_KEY with your own key.

You will also replace /PATH-TO-FILE/FILENAME.JSON with the path to the service account key you just downloaded, including the file name.

Run the cell and you’re ready to move on.
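A wrong path or the wrong kind of JSON file tends to surface as a confusing authentication error much later. The sketch below is an optional sanity check on the downloaded key file. The helper name and the list of required fields are my assumptions, based on the fields a service account key file normally contains; it does not contact Google or prove the key is valid.

```python
import json
from pathlib import Path

# Fields a service account key file is assumed to contain
REQUIRED_FIELDS = ("type", "project_id", "private_key", "client_email")


def check_service_account_file(path):
    """Return a list of problems found; an empty list means the file looks usable."""
    file_path = Path(path)
    if not file_path.is_file():
        return [f"file not found: {file_path}"]
    try:
        data = json.loads(file_path.read_text(encoding="utf-8"))
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc}"]
    if not isinstance(data, dict):
        return ["top-level JSON value is not an object"]
    problems = [f"missing field: {field}" for field in REQUIRED_FIELDS if field not in data]
    if data.get("type") != "service_account":
        problems.append('"type" is not "service_account"')
    return problems


# Example use (hypothetical):
# print(check_service_account_file(os.environ["GOOGLE_APPLICATION_CREDENTIALS"]))
```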

Step 4: Create the functions

Next, we’ll create the functions to:

- Scrape the webpage we entered above.

- Analyze the content and extract the entities.

- Generate an article using GPT-4.

    # The function to scrape the web page
    def scrape_url(url):
        response = requests.get(url)
        soup = BeautifulSoup(response.content, "html.parser")
        paragraphs = soup.find_all("p")
        text = " ".join([p.get_text() for p in paragraphs])
        return text

    # The function to pull and analyze the entities on the page using Google's entity analysis (Cloud Natural Language API)
    def analyze_content(content):
        client = language_v1.LanguageServiceClient()
        response = client.analyze_entities(
            request={"document": language_v1.Document(content=content, type_=language_v1.Document.Type.PLAIN_TEXT), "encoding_type": language_v1.EncodingType.UTF8}
        )
        top_entities = sorted(response.entities, key=lambda x: x.salience, reverse=True)[:10]
        for entity in top_entities:
            print(entity.name)
        return top_entities

    # The function to generate the content
    def generate_article(content):
        openai.api_key = os.environ["OPENAI_API_KEY"]
        response = openai.ChatCompletion.create(
            messages = [{"role": "system", "content": "You are a highly skilled writer, and you want to produce articles that will appeal to users and rank well."},
                        {"role": "user", "content": content}],
            model="gpt-4",
            max_tokens=1500, #The maximum with GPT-3 is 4096 including the prompt
            n=1, #How many results to produce per prompt
            #best_of=1 #When n>1 completions can be run server-side and the "best" used
            stop=None,
            temperature=0.8 #A number between 0 and 2, where higher numbers add randomness
        )
        return response.choices[0].message.content.strip()

This is pretty much exactly what the comments describe. We’re creating three functions for the purposes outlined above.

Keen eyes will notice:

    messages = [{"role": "system", "content": "You are a highly skilled writer, and you want to produce articles that will appeal to users and rank well."},

You can edit the content (You are a highly skilled writer, and you want to produce articles that will appeal to users and rank well.) and describe the role you want ChatGPT to take. You can also add tone (e.g., “You are a friendly writer …”).
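API calls occasionally fail for temporary reasons such as rate limits or timeouts. If that happens to you, a small retry wrapper with exponential backoff is one way to cope. This is a sketch under my own assumptions, not part of the original script: with_retries is a hypothetical helper, and it retries on any exception, which is a simplification you may want to narrow.

```python
import time


def with_retries(func, attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call func(); on failure wait base_delay, then 2x, 4x... and try again."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    last_error = None
    for attempt in range(attempts):
        try:
            return func()
        except Exception as exc:  # broad on purpose in this sketch
            last_error = exc
            if attempt < attempts - 1:
                sleep(base_delay * 2 ** attempt)
    raise last_error


# Example use with the function defined above (hypothetical):
# generated_article = with_retries(lambda: generate_article(gpt_prompt))
```

The sleep parameter exists only so the wait can be swapped out when testing.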

Step 5: Scrape the URL and print the entities

Now we’re getting our hands dirty. It’s time to:

- Scrape the URL we entered above.

- Pull all the content that lives within paragraph tags.

- Run it through Google Knowledge Graph API.

- Output the entities for a quick preview.

Basically, you just want to see some entities printed at this stage. If nothing prints, try a different URL.

    content = scrape_url(url)
    entities = analyze_content(content)

You can see that line one calls the function that scrapes the URL we first entered. The second line analyzes the content to extract the entities and key metrics.

Part of the analyze_content function also prints a list of the entities found for quick reference and verification.
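If you see nothing, it helps to know whether the page simply has no text inside paragraph tags. The sketch below extracts paragraph text from an HTML string so you can test that logic offline. Note that it swaps BeautifulSoup for the standard library's html.parser, so it is an illustration of the same idea rather than the script's actual method, and the class and function names are my own.

```python
from html.parser import HTMLParser


class ParagraphExtractor(HTMLParser):
    """Collect the text found inside <p> elements."""

    def __init__(self):
        super().__init__()
        self._in_p = False
        self._current = []
        self.paragraphs = []

    def _flush(self):
        # Join the pieces of the current paragraph and normalize whitespace
        text = " ".join("".join(self._current).split())
        if text:
            self.paragraphs.append(text)
        self._current = []

    def handle_starttag(self, tag, attrs):
        if tag == "p":
            self._flush()  # an unclosed <p> ends when the next one starts
            self._in_p = True

    def handle_endtag(self, tag):
        if tag == "p":
            self._flush()
            self._in_p = False

    def handle_data(self, data):
        if self._in_p:
            self._current.append(data)


def extract_paragraph_text(html: str) -> str:
    """Return all paragraph text in the HTML, joined by single spaces."""
    parser = ParagraphExtractor()
    parser.feed(html)
    parser.close()
    parser._flush()
    return " ".join(parser.paragraphs)


sample_html = "<h1>Title</h1><p>First <b>bold</b> paragraph.</p><div>skip</div><p>Second.</p>"
print(extract_paragraph_text(sample_html))
```

An empty string from a function like this means there is nothing to send for entity analysis, which is the "check a different site" case.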

Step 6: Analyze the entities

When I first started playing around with the script, I started with 20 entities and quickly discovered that’s usually too many. But is the default (10) right?

To find out, we’ll write the data to W&B Tables for easy assessment. It’ll keep the data indefinitely for future evaluation.

First, you'll need to take about 30 seconds to sign up. (Don't worry, it’s free for this type of thing!) You can do so at https://wandb.ai/site.

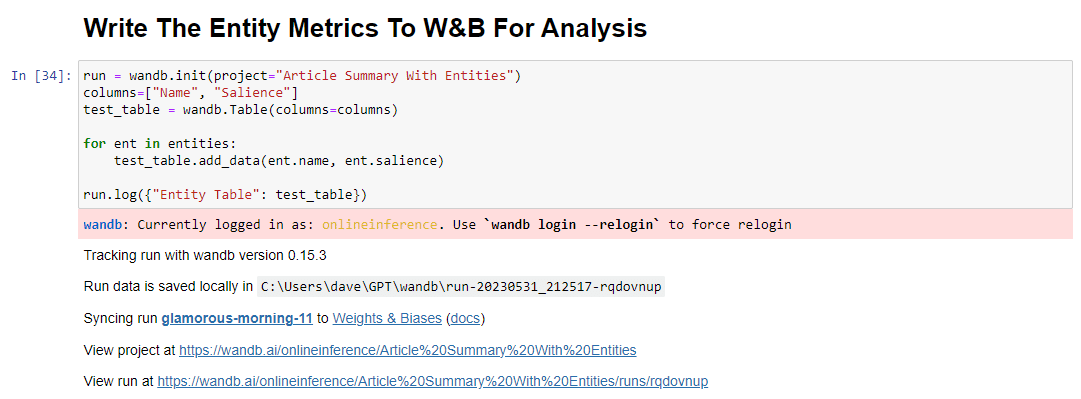

Once you’ve done that, the code to do this is:

    run = wandb.init(project="Article Summary With Entities")
    columns = ["Name", "Salience"]
    ent_table = wandb.Table(columns=columns)

    for entity in entities:
        ent_table.add_data(entity.name, entity.salience)

    run.log({"Entity Table": ent_table})
    wandb.finish()

When run, the output looks like this:

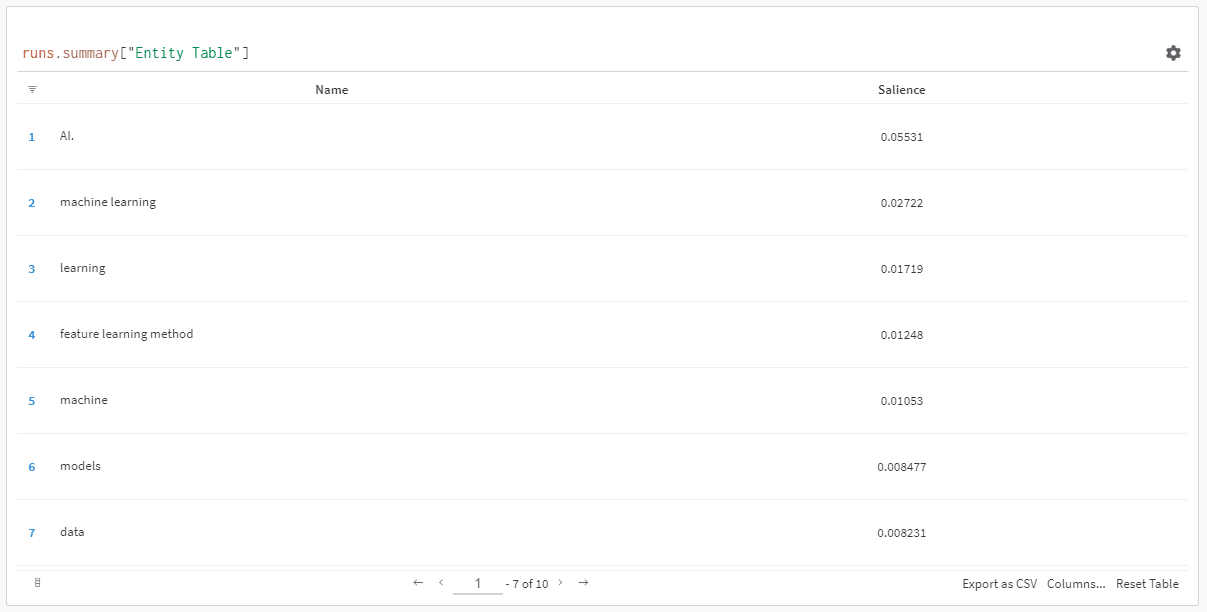

And when you click the link to view your run, you’ll find:

You can see a drop in salience score. Remember that this score measures how important that term is to the page, not to the query.

When reviewing this data, you can choose to adjust the number of entities based on salience, or just when you see irrelevant terms pop up.

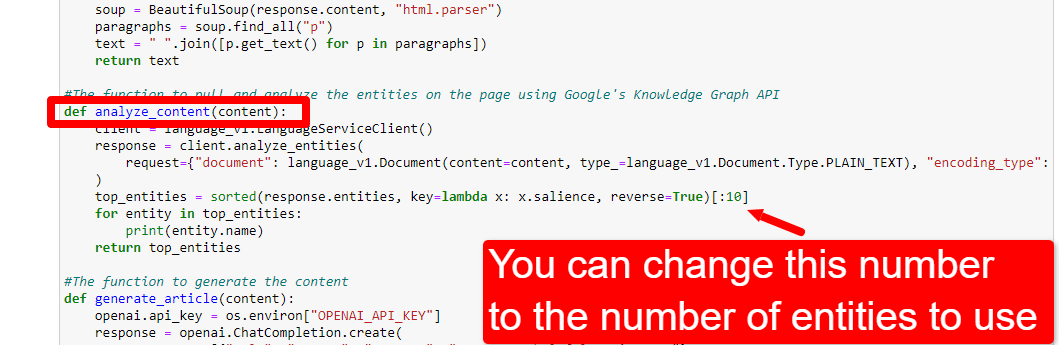

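To adjust the number of entities, you’d head to the functions cell and edit the slice ([:10]) at the end of this line in analyze_content:

    top_entities = sorted(response.entities, key=lambda x: x.salience, reverse=True)[:10]

You’ll then need to rerun that cell, followed by the cell that scrapes and analyzes the content, to use the new entity count.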
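Instead of picking a fixed count by eye, you could also cut the list off at a salience threshold. The sketch below shows one way to do that. The function name and the 0.01 default are arbitrary assumptions on my part, so tune the threshold against what you see in your own table.

```python
from types import SimpleNamespace


def select_entities(entities, min_salience=0.01, max_count=10):
    """Keep entities at or above min_salience, most salient first, capped at max_count."""
    ranked = sorted(entities, key=lambda e: e.salience, reverse=True)
    return [e for e in ranked if e.salience >= min_salience][:max_count]


# Made-up entities with the same .name / .salience attributes the script relies on
demo_entities = [
    SimpleNamespace(name="cookie banner", salience=0.004),
    SimpleNamespace(name="SEO", salience=0.5),
    SimpleNamespace(name="backlinks", salience=0.02),
]

print([e.name for e in select_entities(demo_entities)])
```

Because it only reads the name and salience attributes, the same function should work on the entities returned by analyze_content.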

Step 7: Generate the article outline

The moment you've all been waiting for: it’s time to generate the article outline.

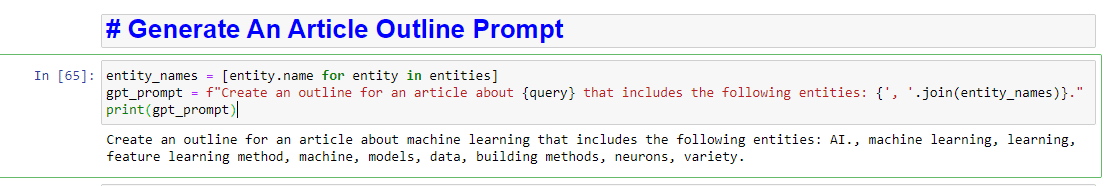

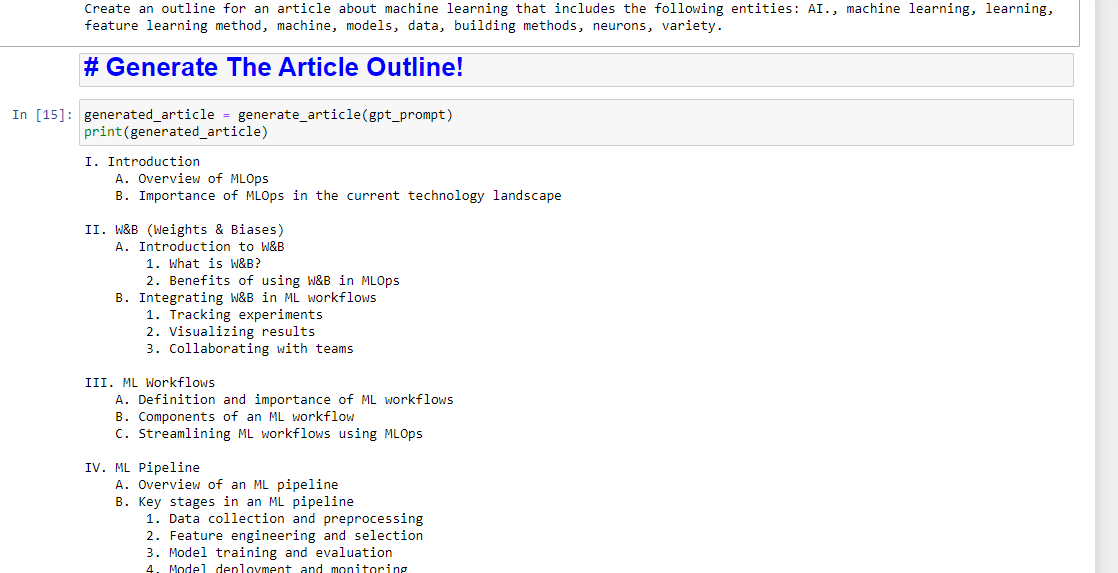

This is done in two parts. First, we need to generate the prompt by adding the cell:

    entity_names = [entity.name for entity in entities]
    gpt_prompt = f"Create an outline for an article about {query} that includes the following entities: {', '.join(entity_names)}."
    print(gpt_prompt)

This essentially creates a prompt to generate an article:

And then, all that’s left is to generate the article outline using the following:

    generated_article = generate_article(gpt_prompt)
    print(generated_article)

Which will produce something like:

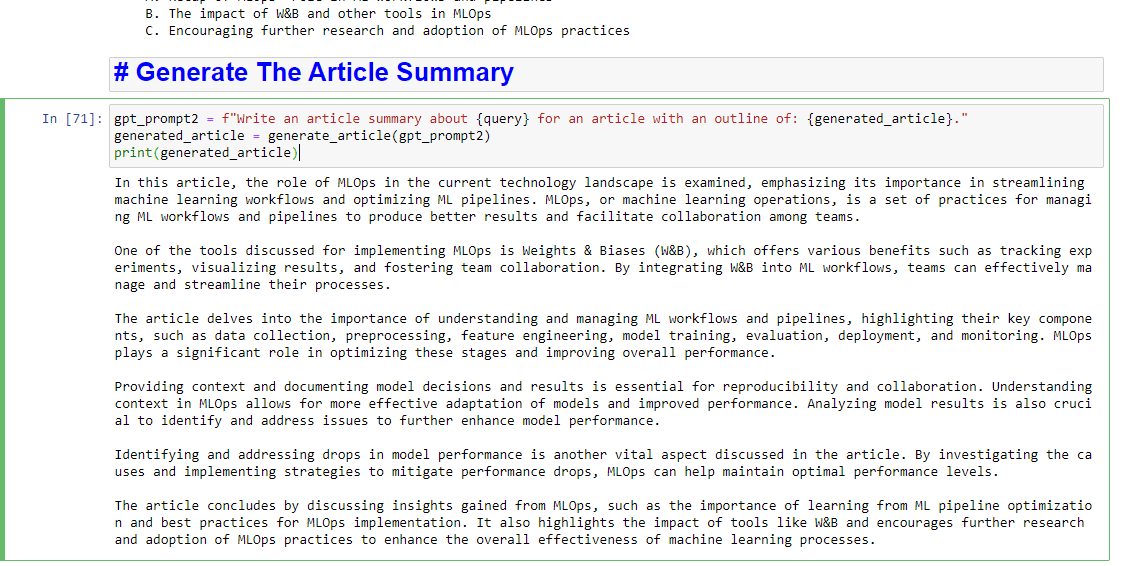
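The entity list may contain the same name more than once, for example with different capitalization, which pads the prompt without adding information. As an optional refinement, the prompt-building cell could be wrapped in a function that removes such repeats first. This is a sketch: build_outline_prompt is a hypothetical helper, and the prompt wording is copied from the cell above.

```python
def build_outline_prompt(query, entity_names):
    """Build the outline prompt, dropping blank and case-insensitive duplicate names."""
    seen = set()
    unique = []
    for name in entity_names:
        cleaned = name.strip()
        key = cleaned.lower()
        if cleaned and key not in seen:
            seen.add(key)
            unique.append(cleaned)  # keep the first spelling, in original order
    return (
        f"Create an outline for an article about {query} "
        f"that includes the following entities: {', '.join(unique)}."
    )


print(build_outline_prompt("link building", ["SEO", "seo", " backlinks ", ""]))
```

Since the names keep their original order, the most salient entities still appear first in the prompt.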

And if you’d also like to get a summary written up, you can add:

    gpt_prompt2 = f"Write an article summary about {query} for an article with an outline of: {generated_article}."
    generated_article = generate_article(gpt_prompt2)
    print(generated_article)

Which will produce something like:

The post How to use Google entities and GPT-4 to create article outlines appeared first on Search Engine Land.

via Search Engine Land https://ift.tt/b6CivMJ
